At A Glance

- Trust in AI depends on accountability, not just accuracy

- Explainability must operate at the decision level

- The biggest failure point is misalignment with real workflows

- Human-in-the-loop models are foundational in compliance

- AI is evolving into decision infrastructure within banking operations

Explainability Is Not a Feature. It Is a Control System

Let’s start where this actually shows up

A large bank. Monday morning.

There are thousands of alerts sitting in the queue. Some are already overdue. Investigators are working through cases, balancing speed with caution, knowing that every decision may be reviewed later.

A transaction gets flagged.

The system says: high risk.

Now someone has to answer a much harder question:

Why?

Not in theory. Not in model terms.

In a way that holds up in an audit, a regulator review, or an internal escalation.

This is where most AI conversations start to fall apart.

Because in compliance, the problem is not detecting risk.

It is defending decisions.

The real gap is not accuracy. It is accountability

AI models in transaction monitoring and fraud detection have improved significantly. Many can reduce noise, surface patterns, and prioritize cases better than traditional rule-based systems.

But that is not what determines adoption.

Compliance leaders are not optimizing for model performance in isolation. They are balancing three pressures at once:

- Volume: rising alert counts and investigator workload

- Risk: the cost of missing true positives

- Scrutiny: the need to justify every decision under review

This is why a model that is “more accurate” is not automatically trusted.

If a decision cannot be explained clearly, it becomes a risk in itself.

That is the gap explainability is meant to close.

Explainability is not about models. It is about decisions

Most discussions around explainability stay at the model level. Feature importance. Transparency. Interpretability.

That is necessary, but not sufficient.

In a compliance workflow, explainability only matters if it answers one question:

Can an investigator clearly understand and justify this decision?

Take a transaction alert.

A useful system does not just assign a score. It shows:

- What patterns triggered the alert

- How the behavior deviates from the customer’s baseline

- Which risk signals mattered most

- What evidence supports the outcome

This shifts the interaction.

From:

“the model flagged this”

To:

“this was flagged because of these specific factors, and here is the evidence”

That is the difference between output and insight.
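To make this concrete, here is a minimal sketch of what a decision-level explanation could look like as a data structure. Every name in it (`AlertExplanation`, `deviation_from_baseline`, the field names) is a hypothetical chosen for illustration, and the z-score is only one simple way to express deviation from a customer's baseline. It is not a description of any particular vendor's system.

```python
from dataclasses import dataclass, field
from statistics import mean, pstdev


def deviation_from_baseline(amount: float, history: list[float]) -> float:
    """Z-score of one amount against the customer's own past amounts.

    Assumption: a plain z-score stands in for whatever baseline
    comparison a production system would actually use.
    """
    if len(history) < 2:
        return 0.0  # not enough history to define a baseline
    spread = pstdev(history)
    if spread == 0:
        return 0.0  # perfectly uniform history, no meaningful spread
    return (amount - mean(history)) / spread


@dataclass
class AlertExplanation:
    """Hypothetical explanation record attached to a single alert."""
    alert_id: str
    risk_score: float                 # model output, assumed 0.0 to 1.0
    triggered_patterns: list[str]     # what patterns triggered the alert
    baseline_deviation: float         # deviation from the customer's baseline
    signal_weights: dict[str, float]  # how much each risk signal mattered
    evidence: list[str] = field(default_factory=list)  # supporting records

    def top_signals(self, n: int = 3) -> list[str]:
        """Names of the n signals with the largest weights."""
        ranked = sorted(self.signal_weights.items(),
                        key=lambda item: item[1], reverse=True)
        return [name for name, _ in ranked[:n]]

    def summary(self) -> str:
        """One sentence an investigator could paste into a case note."""
        return (
            f"Alert {self.alert_id} scored {self.risk_score:.2f} because of "
            f"{', '.join(self.triggered_patterns)}; the amount is "
            f"{self.baseline_deviation:.1f} standard deviations from the "
            f"customer's baseline; top signals: "
            f"{', '.join(self.top_signals())}; "
            f"{len(self.evidence)} evidence item(s) attached."
        )
```

The point of the structure is that the score never travels alone. An investigator calling `summary()` gets the factors and the evidence count in the same sentence as the score.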


Where trust actually breaks

In most Tier 1 environments, AI does not fail because it performs poorly. It fails because it does not align with how compliance work actually happens.

A few failure points show up repeatedly.

Decisions cannot be reconstructed

Six months later, an auditor asks why a case was cleared.

The investigator is no longer on the case.

The reasoning is not structured.

The system cannot explain itself.

That is exposure.

AI does not match investigator logic

If outputs do not align with how analysts think about risk, the system creates friction.

Investigators spend time validating the AI instead of using it.

Adoption slows down.

Human oversight is unclear

Who decides?

Where does AI stop and human judgment begin?

If that line is not explicit, accountability becomes blurred.

Governance is added too late

Many teams build models first and think about controls later.

By the time governance is introduced, workflows are already misaligned.

Scaling becomes difficult.

The false positive problem is really a trust problem

Reducing false positives is often the starting point for AI in AML.

On paper, the gains can be significant.

In practice, those gains only hold if investigators trust the system.

When explainability is strong:

- False positives are dismissed faster

- True positives are easier to justify

- Supervisors review decisions with confidence

When it is not:

- Every case requires deeper validation

- Time savings disappear

- Risk perception increases

This is why many AI initiatives stall after the pilot stage.

Not because the model failed.

Because the system was not trusted.

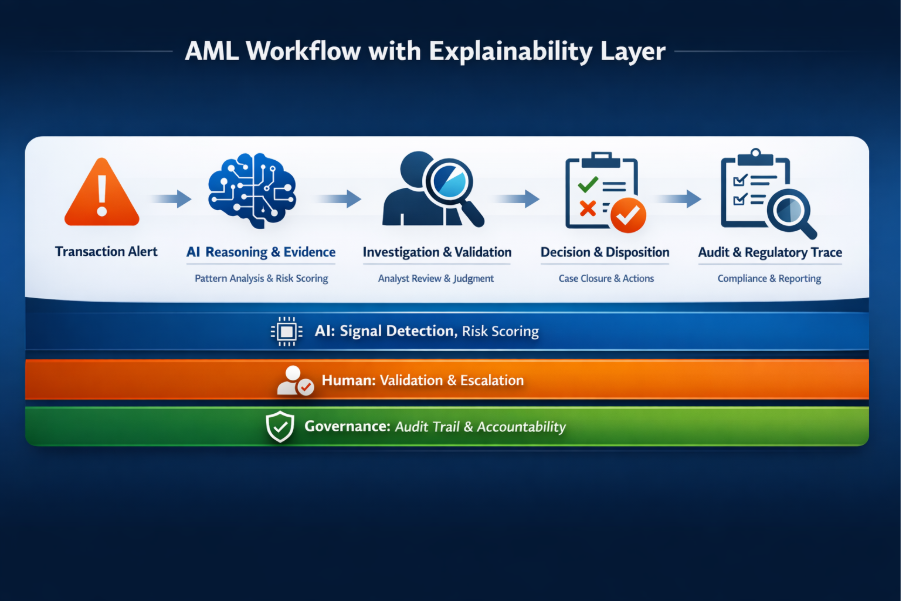

Human-in-the-loop is not a limitation. It is the model

There is still a narrative that AI will fully automate compliance decisions.

That is not what is happening in leading banks.

Instead, the operating model looks like this:

- AI handles signal generation, pattern detection, and prioritization

- Humans handle validation, judgment, and escalation

- Governance ensures every decision is traceable and defensible

This is not a compromise.

It is how regulated systems operate.

Explainability is what makes this division of labor work, because it allows humans to understand and act on AI outputs in real time.
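A rough sketch of that split in code, under stated assumptions: the AI side only orders the queue, and a case cannot be closed without a named investigator and a written rationale. The names `Case`, `prioritize`, and `record_decision`, and the two allowed outcomes, are hypothetical and chosen only to illustrate the boundary.

```python
from dataclasses import dataclass
from typing import Optional

# Assumption: two outcomes are enough to illustrate the idea.
ALLOWED_DECISIONS = {"clear", "escalate"}


@dataclass
class Case:
    case_id: str
    risk_score: float   # produced by the model
    explanation: str    # reasoning surfaced alongside the alert
    decision: Optional[str] = None
    decided_by: Optional[str] = None
    rationale: Optional[str] = None


def prioritize(cases: list[Case]) -> list[Case]:
    """AI side: order the queue by risk. It never sets a decision."""
    return sorted(cases, key=lambda case: case.risk_score, reverse=True)


def record_decision(case: Case, investigator_id: str,
                    decision: str, rationale: str) -> Case:
    """Human side: a decision needs a named person and a stated reason."""
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision: {decision!r}")
    if not investigator_id.strip():
        raise ValueError("a decision must be attributed to an investigator")
    if not rationale.strip():
        raise ValueError("a decision must include a written rationale")
    case.decision = decision
    case.decided_by = investigator_id
    case.rationale = rationale
    return case
```

The design choice worth noticing is that `prioritize` has no way to write to `decision`. The line between AI and human judgment is enforced by the shape of the code, not by policy alone.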

The real trade-off is different from what most think

There is a common assumption that explainability reduces model performance.

In compliance, that framing asks the wrong question.

The real question is:

Can this decision be defended under scrutiny?

A highly complex, opaque model may perform well in isolation but fail in production.

A transparent, well-structured system is far more likely to be adopted and scaled.

Leading institutions are not optimizing for maximum complexity.

They are optimizing for operational trust.

Where this becomes real

The hardest part of AI in compliance is not building models.

It is embedding them into environments where:

- investigators are under constant pressure

- case volumes are high

- audit expectations are unforgiving

This is where most initiatives slow down.

Bridging this gap requires more than technical capability. It requires alignment between:

- AI outputs and investigator workflows

- decision logic and governance requirements

- system design and audit expectations

At LatentBridge, this is where most of the work sits.

Not in building standalone models, but in embedding AI into live compliance workflows such as transaction monitoring and KYC, where explainability and auditability are built into the system itself.

That includes designing systems where:

- reasoning is surfaced alongside alerts

- decisions are structured and traceable

- audit trails are generated as part of the workflow, not after the fact
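As an illustration of an audit trail produced by the workflow itself, the sketch below appends one entry per action and chains entries by hash so that later edits are detectable. This is a generic hash-chaining technique using Python's standard library, not a description of a specific product; `AuditTrail` and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        payload = {key: value for key, value in entry.items() if key != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, case_id: str, actor: str,
               action: str, rationale: str) -> dict:
        """Record an action at the moment it happens in the workflow."""
        entry = {
            "case_id": case_id,
            "actor": actor,
            "action": action,
            "rationale": rationale,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": (self._entries[-1]["hash"]
                          if self._entries else GENESIS_HASH),
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return dict(entry)

    def history(self, case_id: str) -> list[dict]:
        """Reconstruct what happened on one case, in order."""
        return [dict(e) for e in self._entries if e["case_id"] == case_id]

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was broken."""
        previous = GENESIS_HASH
        for entry in self._entries:
            if entry["prev_hash"] != previous:
                return False
            if entry["hash"] != self._digest(entry):
                return False
            previous = entry["hash"]
        return True
```

With a log like this, the auditor's question six months later is answered by calling `history(case_id)`, and `verify()` gives some assurance that the record is the one written at the time.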

The shift is subtle, but important.

From AI as a tool

to AI as part of the decision infrastructure.

So, can AI be trusted?

AI can be trusted in compliance decisions when three conditions are met:

- The reasoning behind decisions is clear and accessible

- Human oversight is embedded within the workflow

- Governance ensures accountability at every stage

Without these, AI remains difficult to scale beyond pilots.

With them, it becomes a reliable part of how modern compliance functions operate. Not replacing judgment, but strengthening it.