At a Glance

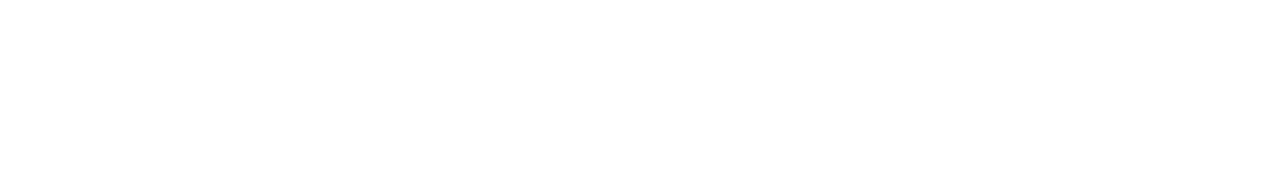

Modern financial crime risk no longer sits neatly within fraud, AML, sanctions, or KYC silos. The same customer behaviour can trigger signals across multiple systems, yet most banks still investigate these risks in parallel rather than in context. This leads to duplicated investigations, inconsistent decisioning, and governance gaps that regulators increasingly scrutinise.

Integrating AI across fraud and AML is not about replacing existing monitoring engines. It is about introducing contextual risk intelligence that connects cross-domain signals, assembles investigation-ready context, and supports consistent, explainable decision-making. When implemented with strong governance and human oversight, this approach reduces investigative friction, improves audit defensibility, and enables financial crime teams to interpret risk more accurately at scale.

For Tier 1 banks operating complex legacy stacks, the strategic shift is moving from siloed model optimisation to unified risk interpretation across fraud, AML, KYC, and sanctions workflows. Institutions that adopt cross-domain AI frameworks are better positioned to handle rising alert volumes, regulatory expectations, and increasingly sophisticated financial crime patterns without compromising control or accountability.

For years, financial crime programmes inside banks have evolved in parallel rather than together. Fraud teams built their own detection logic. AML teams refined transaction monitoring. Sanctions teams focused on screening accuracy. KYC teams improved onboarding controls. Each function became more sophisticated within its own scope, yet the underlying operating model remained fragmented.

This made sense in a slower regulatory and risk environment.

It makes far less sense today.

Financial crime risk no longer appears in neat categories. A single customer can trigger fraud signals, AML anomalies, sanctions exposure, and KYC inconsistencies across different systems, jurisdictions, and timelines. When these signals are assessed in isolation, institutions do not just duplicate effort. They dilute risk intelligence.

The question is no longer whether banks should use AI in fraud or AML.

The real strategic question is whether AI is being integrated across financial crime workflows or deployed as another siloed capability.

That distinction is becoming operationally material.

The Institutional Reality: Why Fraud and AML Still Operate in Silos

Inside most Tier 1 banks, fraud and AML do not merely use different models. They run on different data pipelines, separate case management systems, and distinct escalation frameworks. Fraud investigations often prioritise immediacy and customer protection, while AML investigations emphasise regulatory defensibility and documentation depth. Both are valid objectives, but they rarely converge operationally.

Legacy vendor stacks reinforce this separation. Fraud monitoring platforms, transaction monitoring systems, sanctions engines, and KYC repositories are frequently procured, implemented, and governed by different teams. Over time, this creates parallel risk views of the same customer, each accurate within its domain but incomplete in aggregate.

The consequence is not just technological fragmentation.

It is fragmented decision-making.

An alert cleared by fraud may still escalate within AML. A high-risk onboarding profile may not be fully visible to transaction monitoring teams. Investigations are repeated, context is rebuilt manually, and analysts spend disproportionate time reconstructing risk narratives instead of assessing them.

This is where integration becomes less of a technology initiative and more of an operating model redesign.

Why Siloed AI Is Quietly Becoming a Governance Risk

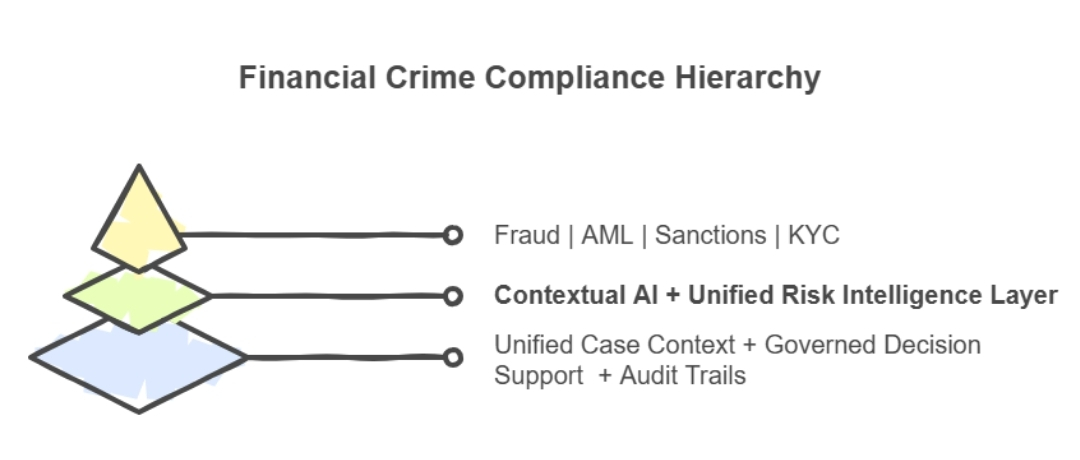

Many institutions have already introduced AI into fraud detection or AML monitoring. Yet deploying AI within silos often amplifies, rather than resolves, fragmentation. Models become more accurate within their own domain, but cross-domain blind spots persist.

Regulators are increasingly attentive to decision consistency across financial crime controls. Supervisory reviews now examine not only whether alerts were handled correctly, but whether risk signals were interpreted coherently across functions. A customer flagged for anomalous transactions, adverse media, and onboarding inconsistencies should not receive three disconnected investigative narratives.

This is where siloed AI creates an unintended governance gap.

Each model optimises locally.

The institution’s risk posture, however, is evaluated globally.

A fragmented AI landscape can therefore lead to defensibility challenges, especially when audit trails reveal that relevant context existed elsewhere in the organisation but was not operationally surfaced during decisioning.

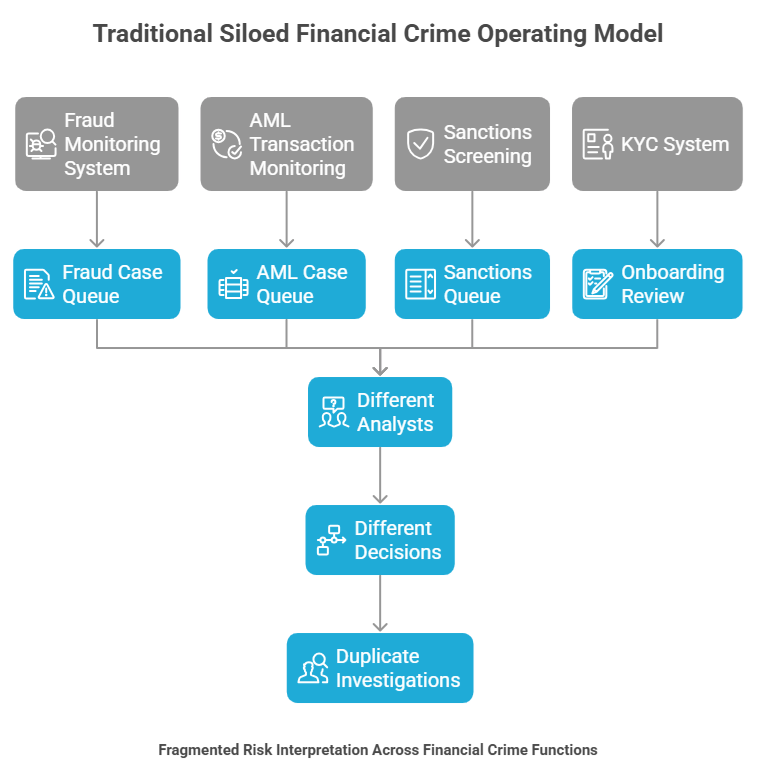

From Functional AI to Cross-Domain Risk Intelligence

Integrating AI across fraud and AML does not mean replacing existing systems. In practice, it means introducing a contextual intelligence layer that connects signals across KYC, transaction monitoring, sanctions screening, and fraud detection workflows.

Instead of analysing alerts as isolated events, AI can assemble a unified risk context. Customer behaviour, historical alerts, onboarding data, jurisdictional exposure, and network linkages can be evaluated together, enabling more proportionate and explainable investigations.

This shift is subtle but powerful.

Risk is no longer scored solely on static rules or single-channel triggers.

It is interpreted in context.

For example, a transaction anomaly evaluated alongside recent onboarding inconsistencies and adverse media signals produces a very different risk narrative than the same anomaly assessed in isolation. Analysts receive structured context rather than fragmented data points, which materially improves both speed and judgment quality.
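To make the idea of a unified risk context concrete, here is a minimal Python sketch. It is illustrative only and does not describe any particular vendor's data model: the `Signal` and `RiskContext` types, the domain labels, and the sample customers are all assumptions introduced for this example. It groups signals from separate systems by customer so that the same transaction anomaly can be read with or without its surrounding context.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Signal:
    """One risk signal emitted by a single monitoring system (hypothetical shape)."""
    customer_id: str
    domain: str  # illustrative labels: "fraud", "aml", "kyc", "sanctions"
    kind: str    # e.g. "transaction_anomaly"
    detail: str


@dataclass
class RiskContext:
    """All known signals for one customer, grouped by originating domain."""
    customer_id: str
    signals_by_domain: dict = field(default_factory=dict)

    @property
    def domains(self):
        return sorted(self.signals_by_domain)

    def is_cross_domain(self):
        # True when more than one domain has raised a signal for this customer.
        return len(self.signals_by_domain) > 1


def assemble_contexts(signals):
    """Group a flat stream of signals into one RiskContext per customer."""
    grouped = defaultdict(lambda: defaultdict(list))
    for s in signals:
        grouped[s.customer_id][s.domain].append(s)
    return {
        cid: RiskContext(cid, {d: list(v) for d, v in by_domain.items()})
        for cid, by_domain in grouped.items()
    }


# Sample data is invented; placing adverse media under "kyc" is an assumption.
signals = [
    Signal("C-001", "aml", "transaction_anomaly", "unusual outbound transfer pattern"),
    Signal("C-001", "kyc", "onboarding_inconsistency", "address mismatch at onboarding"),
    Signal("C-001", "kyc", "adverse_media", "name match in news screening"),
    Signal("C-002", "aml", "transaction_anomaly", "unusual outbound transfer pattern"),
]

contexts = assemble_contexts(signals)
for cid in sorted(contexts):
    ctx = contexts[cid]
    label = "cross-domain" if ctx.is_cross_domain() else "single-domain"
    print(cid, ctx.domains, label)
```

Both customers carry the same transaction anomaly, but only the first arrives with corroborating onboarding and adverse media signals. That difference is what an analyst would otherwise have to reconstruct by hand.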

A Practitioner Insight: The Real Duplication Is Not Alerts, But Investigations

One of the least discussed operational inefficiencies in large banks is investigative duplication. The same customer risk is often assessed separately by fraud and AML teams, each rebuilding context from different systems, documentation standards, and policy lenses.

This does not occur because teams lack expertise.

It occurs because systems do not share structured intelligence.

AI integration, when implemented correctly, reduces this duplication by assembling investigation-ready context at the point of alert triage. Analysts across fraud and AML functions begin with the same evidence base, the same customer narrative, and the same historical signals. Decision variance falls, documentation improves, and quality assurance becomes more proactive than corrective.

This is not merely an efficiency gain.

It is an institutional memory gain.
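One way to picture shared context at triage is a registry that routes every alert for a customer into a single open case, whichever team's system raised it. The Python sketch below is a simplified assumption rather than a description of any real case management product: `Alert`, `Case`, and `CaseRegistry` are hypothetical names, and a production system would also need case closure, time windows, and access controls.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Alert:
    alert_id: str
    customer_id: str
    source: str  # originating function, e.g. "fraud" or "aml" (illustrative)


@dataclass
class Case:
    """A single shared investigation for one customer."""
    customer_id: str
    alerts: list = field(default_factory=list)

    @property
    def sources(self):
        # Which functions have contributed alerts to this case.
        return sorted({a.source for a in self.alerts})


class CaseRegistry:
    """Routes alerts to one open case per customer instead of one per team."""

    def __init__(self):
        self._open = {}

    def route(self, alert):
        case = self._open.get(alert.customer_id)
        if case is None:
            case = Case(alert.customer_id)
            self._open[alert.customer_id] = case
        case.alerts.append(alert)
        return case

    def open_cases(self):
        return list(self._open.values())


registry = CaseRegistry()
for alert in [
    Alert("F-1", "C-001", "fraud"),
    Alert("A-7", "C-001", "aml"),
    Alert("A-8", "C-002", "aml"),
]:
    registry.route(alert)

for case in registry.open_cases():
    print(case.customer_id, case.sources, len(case.alerts))
```

Three alerts produce two cases rather than three. The fraud and AML alerts for the same customer land in one investigation with one evidence base.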

The Role of Contextual Risk Scoring in Unified Financial Crime Operations

Traditional risk scoring frameworks rely heavily on thresholds, weightings, and static classifications. While these remain important, they struggle to reflect how modern financial crime evolves across time and channels.

Contextual AI introduces dynamic risk interpretation. It evaluates behavioural patterns, relational linkages, temporal activity shifts, and cross-domain signals rather than isolated triggers. A customer’s risk posture is therefore continuously interpreted rather than periodically recalculated.

This is particularly valuable in complex environments where fraud tactics, money laundering typologies, and sanctions exposure intersect. Contextual scoring can help reduce false positives without weakening controls, because each decision draws on evidence from across domains rather than on a single rule firing in isolation.

Financial crime teams often describe the impact as operational “calm”.

Fewer redundant escalations.

Fewer context gaps.

More defensible decisions.
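The contextual scoring described in this section can be sketched in a few lines of Python. This is a deliberately simple illustration, not a calibrated model: the signal names and adjustment values in `CONTEXT_ADJUSTMENTS` are invented for the example, and a real framework would derive them from validated models and policy. The point it shows is that context can raise or lower a score, and that every adjustment is recorded as a reason.

```python
# Hypothetical adjustments: positive values corroborate risk, negative values mitigate it.
CONTEXT_ADJUSTMENTS = {
    "onboarding_inconsistency": 0.15,
    "adverse_media": 0.20,
    "sanctions_proximity": 0.25,
    "long_standing_payee": -0.15,
}


def contextual_score(base_score, context_signals):
    """Adjust a single-domain alert score using signals from other domains.

    Returns the adjusted score, clamped to [0, 1], and a list of reasons
    describing each adjustment that was applied.
    """
    if not 0.0 <= base_score <= 1.0:
        raise ValueError("base_score must be between 0 and 1")

    score = base_score
    reasons = [f"base score {base_score:.2f} from originating alert"]
    for name in sorted(set(context_signals)):
        adjustment = CONTEXT_ADJUSTMENTS.get(name)
        if adjustment is None:
            continue  # unknown signals are ignored rather than guessed at
        score += adjustment
        reasons.append(f"{adjustment:+.2f} for {name}")

    clamped = max(0.0, min(score, 1.0))
    return round(clamped, 2), reasons


score, reasons = contextual_score(0.40, ["onboarding_inconsistency", "adverse_media"])
print(score)
for reason in reasons:
    print(" ", reason)
```

The same base score of 0.40 moves up when corroborating signals are present and down when a mitigating signal such as `long_standing_payee` is present. Because the reasons travel with the score, an analyst or auditor can see how it was reached.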

Governance and Explainability: Non-Negotiables in AI Integration

For compliance leaders, integration must never come at the expense of governance. AI across fraud and AML must remain explainable, auditable, and firmly aligned with regulatory expectations. Decision-support models should provide clear reasoning trails, policy references, and structured evidence bundles that can withstand internal audit and supervisory scrutiny.

Human oversight remains central.

AI should structure investigations, not autonomously conclude them.

When governance is embedded into workflows rather than layered on top, audit readiness becomes a natural byproduct of operations rather than a reactive exercise. This is especially critical as global regulators continue to emphasise model transparency, traceability, and accountable decision frameworks.
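The principle that AI structures an investigation while a human concludes it can be expressed directly in a data structure. The Python sketch below is an assumption-laden illustration: `EvidenceBundle`, its fields, the case identifiers, and the policy reference string are all hypothetical. It stores the model's recommendation alongside its reasoning trail, but the case only closes when a named analyst records a decision with a rationale.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class EvidenceBundle:
    """Structured evidence for one case; the recommendation is advisory only."""
    case_id: str
    reasoning: list        # ordered reasoning trail produced during context assembly
    policy_refs: list      # policy clauses the reasoning relies on (placeholder IDs)
    recommendation: str    # AI suggestion, never applied automatically
    decision: Optional[str] = None
    decided_by: Optional[str] = None
    rationale: Optional[str] = None
    decided_at: Optional[datetime] = None

    @property
    def is_closed(self):
        # A case is closed only once a human decision has been recorded.
        return self.decision is not None

    def record_decision(self, analyst_id, decision, rationale):
        if self.is_closed:
            raise ValueError("a decision has already been recorded for this case")
        if not analyst_id or not rationale:
            raise ValueError("a named analyst and a written rationale are required")
        self.decision = decision
        self.decided_by = analyst_id
        self.rationale = rationale
        self.decided_at = datetime.now(timezone.utc)


bundle = EvidenceBundle(
    case_id="CASE-001",
    reasoning=[
        "transaction anomaly raised by monitoring",
        "onboarding inconsistency found in KYC record",
    ],
    policy_refs=["EXAMPLE-POLICY-REF-1"],
    recommendation="escalate",
)
print(bundle.is_closed)

bundle.record_decision(
    "analyst-42", "escalate", "Cross-domain signals corroborate the anomaly."
)
print(bundle.is_closed, bundle.decided_by)
```

Keeping the recommendation and the decision as separate fields means the audit trail shows both what the system suggested and what the analyst decided, including cases where the two differ.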

Subtle but Urgent: Why Integration Is Becoming Time-Sensitive

The pressure to integrate fraud and AML is no longer purely strategic. It is becoming operationally urgent. Alert volumes are rising, typologies are converging, and regulatory expectations around holistic risk management are intensifying.

Banks that continue to optimise fraud and AML independently may achieve local efficiencies but still face systemic blind spots. Meanwhile, institutions that unify risk intelligence gain faster investigations, more consistent decisioning, and stronger governance narratives.

The competitive shift will not be defined by who adopts more AI.

It will be defined by who integrates AI more intelligently across financial crime domains.

A Balanced Path Forward for Banks

A practical integration approach does not require wholesale system replacement. Most banks can retain existing detection engines while introducing a unifying intelligence framework that enhances context assembly, supports governed decisioning, and strengthens cross-functional visibility.

This requires domain-aware frameworks, strong data interpretation capabilities, and a clear understanding of regulatory operating environments. With the right capability stack and implementation discipline, banks can move toward integrated financial crime operations without disrupting core compliance controls.

Institutions exploring this shift increasingly seek partners who combine financial crime domain expertise, AI capability, and governance-aligned frameworks rather than standalone model deployments. The emphasis is moving toward operationalising risk intelligence in a structured, regulator-conscious manner rather than experimenting with isolated AI tools.

Closing Perspective

Integrating AI across fraud and AML is not a technology trend.

It is an evolution in how financial crime risk is understood, investigated, and governed.

As risk signals become more interconnected, siloed interpretation becomes less defensible. Context, consistency, and explainability are emerging as the defining pillars of modern financial crime programmes. Banks that align fraud, AML, KYC, and sanctions intelligence within a unified analytical framework will be better positioned to manage complexity, reduce investigative friction, and strengthen regulatory confidence.

The future of financial crime operations will not be defined by separate systems working harder.

It will be defined by integrated intelligence working smarter.