At A Glance

- Most banks are not choosing between AI and automation. They are operating with both, often without clear boundaries

- Automation is effective in structured, repeatable processes but struggles with context and variability

- AI improves signal detection and prioritization, but fails when decisions cannot be explained or trusted

- High false-positive rates in sanctions and AML are not just a detection problem, but a decision-making problem

- The real issue is not technology capability, but unclear ownership of decisions within workflows

- Leading institutions are moving toward workflow-first design, combining automation, AI, and human oversight

- Scalable systems treat AI as decision support, not decision replacement

Most institutions are not choosing between AI and automation. They are inheriting both.

Across banking operations, automation has been in place for years.

Rule engines, workflow systems, and robotic process automation (RPA) have been used to structure processes, reduce manual effort, and enforce consistency. More recently, AI has been introduced to address the limits of these systems, particularly in areas where rules begin to break down.

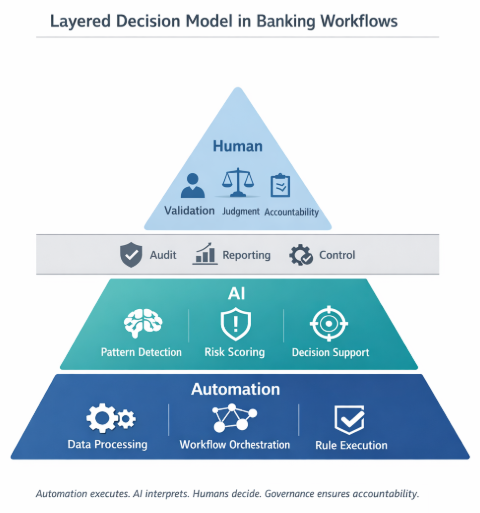

This has created a layered environment.

Automation handles structured tasks.

AI is expected to handle ambiguity.

In theory, the distinction is clear.

In practice, it is not.

Many organizations are now operating with both, without a clear understanding of where one ends and the other begins. That ambiguity is where inefficiencies and risks begin to surface.

Automation works best where the world is predictable

Automation excels in environments where the rules are stable and the process is repeatable.

In banking workflows, this includes:

- data extraction and validation

- workflow routing and case assignment

- rule-based alert generation

- regulatory reporting processes

These systems are effective because they enforce consistency. They do not interpret. They execute.

But this is also where their limits begin to show.

In KYC workflows, for example, automation can streamline document intake and routing. But as soon as analysts encounter inconsistencies across incorporation documents, ownership structures, or fragmented data sources, manual intervention increases again.

In most Tier 1 environments, analysts still switch between multiple disconnected systems, manually reconciling data and rebuilding context at each step.

The process is automated.

The decision-making is not.
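The gap between an automated process and a non-automated decision shows up clearly in a minimal sketch of rule-based alert generation and routing. Everything here is hypothetical and for illustration only: the `Transaction` fields, the rule names, the 10,000 threshold, and the placeholder country codes are not taken from any real rulebook or product.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Transaction:
    # Hypothetical, simplified transaction record for illustration only.
    tx_id: str
    amount: float
    country: str

# Hypothetical rules: a name plus a predicate. The threshold and country
# codes are placeholders, not values from any real rulebook.
HIGH_RISK_COUNTRIES = {"XX", "YY"}
RULES = [
    ("large_amount", lambda tx: tx.amount >= 10_000),
    ("high_risk_country", lambda tx: tx.country in HIGH_RISK_COUNTRIES),
]

def generate_alerts(tx: Transaction) -> list[str]:
    """Return the name of every rule the transaction trips.

    Same input, same output: the engine executes, it does not interpret.
    """
    return [name for name, predicate in RULES if predicate(tx)]

def route(alerts: list[str]) -> str:
    """Deterministic routing based only on how many rules fired."""
    if not alerts:
        return "no_action"
    if len(alerts) > 1:
        return "priority_queue"
    return "standard_queue"

# Usage: the process is fully automated, but nothing here decides whether
# the activity is actually suspicious; that judgment is left to an analyst.
alerts = generate_alerts(Transaction("t-001", 12_500.0, "XX"))
queue = route(alerts)
print(alerts, queue)  # ['large_amount', 'high_risk_country'] priority_queue
```

The consistency comes from the same property that limits it: every change in behavior means editing `RULES` and retesting, and any context the predicates do not encode is invisible to the engine.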

AI is introduced where rules stop working

AI is not replacing automation. It is being introduced to address the gaps automation cannot cover.

In areas such as transaction monitoring, KYC reviews, and sanctions screening, the challenge is not executing predefined steps. It is interpreting signals.

AI can:

- detect patterns across large volumes of data

- identify deviations from expected behavior

- prioritize cases based on multiple variables

In sanctions workflows, this becomes particularly visible.

Screening systems generate matches. But they do not make decisions. They do not weigh context. They do not encode policy intent.

This leaves analysts to interpret every alert manually.

In many institutions, up to 90% of sanctions alerts are false positives, creating significant operational strain and inconsistent decisions at first-level (L1) review.

AI can reduce this noise by introducing contextual analysis and prioritization.

But this capability introduces a different challenge.

👉 Automation scales volume. AI reduces noise. But neither, on its own, reduces decision risk.
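To illustrate what "contextual analysis and prioritization" of sanctions matches can mean, here is a minimal sketch. It makes a deliberate substitution: a hand-weighted score stands in for a trained model, and the `ScreeningHit` fields, weights, and identifiers are hypothetical. Name similarity uses the standard library's `difflib.SequenceMatcher`.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional

@dataclass
class ScreeningHit:
    # Hypothetical fields: the customer record and the list entry it matched.
    alert_id: str
    customer_name: str
    listed_name: str
    customer_dob: Optional[str]
    listed_dob: Optional[str]
    customer_country: str
    listed_country: str

def name_similarity(a: str, b: str) -> float:
    """Case-insensitive string similarity in [0, 1]."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def context_score(hit: ScreeningHit) -> float:
    """Combine name similarity with contextual evidence.

    The weights are illustrative placeholders standing in for a trained model.
    """
    score = 0.5 * name_similarity(hit.customer_name, hit.listed_name)
    if hit.customer_dob and hit.listed_dob:
        # A conflicting date of birth is strong evidence against a true match.
        score += 0.3 if hit.customer_dob == hit.listed_dob else -0.3
    if hit.customer_country == hit.listed_country:
        score += 0.2
    return score

def prioritize(hits: list[ScreeningHit]) -> list[ScreeningHit]:
    """Order hits so analysts see the most likely true matches first."""
    return sorted(hits, key=context_score, reverse=True)

# Usage: two hits with identical names, which a name-only engine cannot separate.
h_conflict = ScreeningHit("A-1", "John Smith", "John Smith",
                          "1970-01-01", "1980-05-05", "GB", "ZZ")
h_consistent = ScreeningHit("A-2", "John Smith", "John Smith",
                            "1980-05-05", "1980-05-05", "ZZ", "ZZ")
ranked = prioritize([h_conflict, h_consistent])
print([h.alert_id for h in ranked])  # ['A-2', 'A-1']
```

Note that the sketch only reorders the queue. No alert is closed automatically, which is exactly why it reduces noise without, by itself, reducing decision risk.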

Where automation begins to break

In many institutions, the limitations of automation are already visible.

Rule-based systems tend to produce high volumes of alerts, many of which are not actionable. As rules are added to improve coverage, complexity increases, often without improving precision.

Over time, this leads to:

- rising false positives

- investigator fatigue

- increasing cost of compliance operations

More importantly, these systems struggle to adapt. Changes require rule updates, testing cycles, and ongoing maintenance.

At scale, this becomes difficult to sustain.

Where AI begins to break

AI does not fail for the same reasons as automation.

Its challenges are less visible, but more consequential.

The most common issue is not performance, but trust.

If a system cannot clearly explain:

- why a decision was made

- what factors influenced it

- how reliable the output is

then it creates uncertainty.

In regulated environments, uncertainty translates directly into risk.

Investigators hesitate to rely on the system. Supervisors question its outputs. Governance teams struggle to validate decisions.

👉 If a decision cannot be explained, it cannot be defended. And if it cannot be defended, it will not be used.

This is why many AI systems stall at the L1 stage, especially in sanctions workflows.

Even when recommendations are accurate, analysts still need:

- policy references

- supporting evidence

- traceable reasoning

Without this, decisions cannot stand up to audit scrutiny.
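One way to make these requirements operational is to refuse to treat a recommendation as usable unless it carries every element an auditor would ask for: the reasoning, the influencing factors, a reliability measure, policy references, and evidence. The sketch below assumes a hypothetical `Recommendation` record; its field names, outcome labels, and policy-reference format are illustrative, not a standard schema.

```python
from dataclasses import dataclass, field

@dataclass
class Recommendation:
    """Hypothetical shape of an AI recommendation an analyst could defend."""
    alert_id: str
    outcome: str                 # e.g. "escalate" or "discount"
    rationale: str               # why the recommendation was made
    factors: dict[str, float]    # what influenced it, with illustrative weights
    confidence: float            # how reliable the output is, in [0, 1]
    policy_refs: list[str] = field(default_factory=list)  # policy clauses relied on
    evidence: list[str] = field(default_factory=list)     # supporting records

    def missing_for_audit(self) -> list[str]:
        """List the elements that are absent or invalid."""
        gaps = []
        if not self.rationale.strip():
            gaps.append("rationale")
        if not self.factors:
            gaps.append("factors")
        if not 0.0 <= self.confidence <= 1.0:
            gaps.append("confidence")
        if not self.policy_refs:
            gaps.append("policy_refs")
        if not self.evidence:
            gaps.append("evidence")
        return gaps

    def is_defensible(self) -> bool:
        return not self.missing_for_audit()

# Usage: the identifiers and the policy reference below are hypothetical.
rec = Recommendation(
    alert_id="A-2",
    outcome="escalate",
    rationale="Name, date of birth, and country all match the listed entry.",
    factors={"name_similarity": 1.0, "dob_match": 1.0, "country_match": 1.0},
    confidence=0.9,
    policy_refs=["SANCTIONS-POLICY-4.2"],
    evidence=["customer onboarding record", "listed entry"],
)
incomplete = Recommendation("A-1", "discount", "Date of birth conflicts.",
                            {"dob_match": 0.0}, 0.8)
print(rec.is_defensible())             # True
print(incomplete.missing_for_audit())  # ['policy_refs', 'evidence']
```

In this design, a recommendation that fails `is_defensible()` could still reach an analyst, but as an unexplained alert rather than as a suggestion to accept.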

The real mistake: treating this as a choice

The conversation is often framed as:

AI vs Automation

In practice, this framing is incomplete.

Banking workflows are layered systems, where structured processes and judgment-based decisions coexist.

Automation handles structure.

AI supports interpretation.

Humans retain accountability.

👉 Most compliance workflows are not failing because of technology. They are failing because decision ownership is unclear.

The challenge is not choosing between them.

It is understanding where each belongs.

What leading institutions are doing differently

Across Tier 1 banks, there is a shift away from technology-first decisions toward workflow-first design.

Instead of asking:

“Should this be automated or handled by AI?”

They are asking:

“What part of this workflow is structured, and what part requires judgment?”

This is particularly visible in modern KYC architectures.

Rather than replacing systems, leading institutions are treating KYC as a composable stack, where:

- orchestration layers manage workflows

- AI agents handle specific tasks (document extraction, UBO resolution, adverse media analysis)

- human-in-the-loop controls remain central

- governance layers ensure auditability and control

This approach allows incremental adoption without disrupting existing systems.
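The composable-stack idea can be sketched in a few lines: an orchestrator runs whatever task agents are registered, hands their findings to a human reviewer, and records every step. This is a minimal sketch under stated assumptions, not a reference architecture: the `Orchestrator` class, the stub agents, and the reviewer logic are all hypothetical, and real agents for document extraction or UBO resolution would replace the stubs.

```python
from dataclasses import dataclass, field
from typing import Callable

# A "task agent" here is any callable that takes a case and returns findings.
Agent = Callable[[dict], dict]

@dataclass
class Orchestrator:
    agents: dict[str, Agent]  # orchestration layer: registered task agents
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def log(self, step: str, detail: str) -> None:
        # Governance layer: every step is recorded for later review.
        self.audit_log.append((step, detail))

    def run(self, case: dict, reviewer: Callable[[dict], str]) -> str:
        findings = {}
        for name, agent in self.agents.items():
            findings[name] = agent(case)
            self.log(name, f"completed for case {case['case_id']}")
        # Human-in-the-loop: the reviewer, not an agent, makes the decision.
        decision = reviewer(findings)
        self.log("human_review", decision)
        return decision

def extract_documents(case: dict) -> dict:
    # Stub standing in for a document-extraction agent.
    return {"entity_name": case["entity_name"]}

def resolve_ubo(case: dict) -> dict:
    # Stub standing in for a UBO-resolution agent.
    return {"ubos": case.get("owners", [])}

def analyst(findings: dict) -> str:
    # Stub for the human reviewer: approve only when owners were identified.
    return "approve" if findings["ubo_resolution"]["ubos"] else "request_more_information"

orchestrator = Orchestrator({"document_extraction": extract_documents,
                             "ubo_resolution": resolve_ubo})
decision = orchestrator.run(
    {"case_id": "K-001", "entity_name": "Example Ltd", "owners": ["Owner A"]},
    reviewer=analyst,
)
print(decision)  # approve
```

Incremental adoption corresponds to adding one entry to the `agents` mapping: the existing steps, the human review, and the audit trail stay unchanged.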

A more useful way to think about it

Rather than comparing AI and automation directly, it is more useful to think in terms of failure points.

Automation fails when the world becomes too complex for rules.

AI fails when decisions cannot be explained or trusted.

Understanding these boundaries is what allows systems to scale.

From what we’ve seen across implementations in banking environments, the most effective systems are not those that maximize the use of one approach, but those that balance both within the same workflow.

Where this becomes real

In practice, this is less about technology selection and more about system design.

How does an alert move through the workflow?

Where is data structured, and where is it interpreted?

At what point is a decision made, and by whom?

Across implementations in AML, KYC, and sanctions workflows, these questions determine how AI and automation interact.

At LatentBridge, much of the work in this area has focused on bridging exactly this gap.

Not replacing automation with AI, but introducing a decision-support layer between systems and outcomes, where:

- alerts are enriched with contextual reasoning

- recommendations are supported by policy references and evidence

- decisions are structured and traceable from the outset

This is what allows systems to move beyond the pilot stage and operate under real regulatory conditions.
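The questions of where data is structured, where it is interpreted, and who makes the decision can be answered explicitly by writing the alert workflow down as data. The sketch below assumes a hypothetical six-stage lifecycle; the stage names and owner assignments are illustrative choices that mirror the split described earlier (automation handles structure, AI supports interpretation, humans retain accountability).

```python
from enum import Enum

class Owner(Enum):
    AUTOMATION = "automation"
    AI = "ai"
    HUMAN = "human"

# Hypothetical lifecycle for one alert: each stage names who owns it.
# Stage names and assignments are illustrative, not a prescribed design.
LIFECYCLE = [
    ("generate", Owner.AUTOMATION),     # rules create the alert
    ("gather_data", Owner.AUTOMATION),  # pull customer and transaction records
    ("prioritize", Owner.AI),           # contextual scoring and ranking
    ("recommend", Owner.AI),            # suggested outcome with evidence
    ("decide", Owner.HUMAN),            # analyst makes and owns the decision
    ("review", Owner.HUMAN),            # supervisor or QA sign-off
]

def decision_owner(stage: str) -> Owner:
    """Look up who owns a given stage."""
    for name, owner in LIFECYCLE:
        if name == stage:
            return owner
    raise KeyError(f"unknown stage: {stage}")

def validate(lifecycle: list[tuple[str, Owner]]) -> None:
    """Fail fast if ownership of the final decision is not explicit."""
    decide_owners = [owner for name, owner in lifecycle if name == "decide"]
    if len(decide_owners) != 1:
        raise ValueError("exactly one 'decide' stage is required")
    if decide_owners[0] is not Owner.HUMAN:
        raise ValueError("the 'decide' stage must be owned by a human")

print(decision_owner("prioritize").value)  # ai
validate(LIFECYCLE)  # passes: exactly one human-owned 'decide' stage
```

The value is less in the code than in the constraint it enforces: a workflow change that silently hands the final decision to a model would fail `validate` instead of going unnoticed.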

Where to start

For most teams, the challenge is not identifying whether AI or automation is needed.

It is understanding how existing workflows need to evolve before either can be applied effectively.

In practice, that often begins with a simple question:

Where in your current process are decisions being made, and how are they being supported today?

In our experience, mapping this clearly across workflows such as AML, KYC, and sanctions is often the first step toward identifying where automation is sufficient, where AI adds value, and where human judgment remains critical.

For teams exploring this transition, a structured review of existing workflows and decision points can provide a more grounded starting point than jumping directly into tools or models.

👉 If this is something you’re currently working through, we’d be happy to show you how we approach it in practice.

Closing thought

Automation brought consistency to banking operations.

AI is introducing adaptability.

Neither is sufficient on its own.

The systems that scale are those that combine both, in a way that reflects how decisions are actually made, reviewed, and governed.

👉 The real shift is not from automation to AI. It is from task execution to decision systems.

That is where the real value lies.